Even Security People Need Security Training Now

What you missed today

Good evening.

Your developers are vibe coding with AI tools, attackers are impersonating Palo Alto recruiters to hit security professionals specifically, and premium AI accounts are now sold underground like VPN access.

Here are the 5 things you missed today:

1. 🎭 Attackers Are Impersonating Palo Alto Networks Recruiters to Scam Security Pros

The campaign runs as a two-stage social engineering operation: a fake “recruiter” contacts targets via email, then manufactures a bureaucratic crisis around applicant tracking system (ATS) CV requirements to create urgency. A second “expert” offers to fix the CV for a fee, within an artificial time window tied to a fake review panel. Targets are selected using data scraped from LinkedIn, and the lures are highly personalized to the individual’s role and seniority. The uncomfortable detail is that security professionals are the explicit target population. The people being social engineered are the ones who usually teach others to spot it.

2. 🪪 Identity Is Now the Primary Attack Surface, Per Microsoft

Microsoft’s research shows 32% of organizations have duplicative access management solutions, and 40% say they have too many identity vendors. That fragmentation means risk is distributed across dozens of disconnected accounts and permissions, creating lateral movement conditions that are hard to detect. The RSAC announcement includes a new unified identity risk score correlating 100 trillion signals, automatic attack disruption that terminates sessions mid-attack, and a Security Copilot triage agent extended to identity workflows. Worth reading if identity governance is on your roadmap.

3. 🤖 Vibe Coding Is Now Your Attack Surface

81% of developers are using AI for development. In the most extreme cases, “citizen developers” with no security experience are using agents to build and deploy software without any vetting. Tenable’s guide covers the 5 main AI coding use cases and the specific risks they introduce, including insecure code completion, hallucinated libraries that turn into typosquatting attacks, IaC generation with overly permissive defaults, and agentic pipelines with no human in the loop. The key governance question: if AI is helping your team write code 20% faster, did your security scanning capacity increase by 20% to match?
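For the hallucinated-library risk specifically, one low-cost control is to check every dependency an AI tool suggests against a list of packages your team has already vetted. Here is a minimal sketch in Python, assuming a requirements-style text file and a hand-maintained allowlist. The names `APPROVED_PACKAGES` and `unapproved_dependencies` are hypothetical, chosen for illustration, and are not from Tenable’s guide.

```python
import re

# Hypothetical allowlist. In practice this might come from an internal
# registry or a reviewed lockfile rather than a hard-coded set.
APPROVED_PACKAGES = {"requests", "flask", "numpy"}


def unapproved_dependencies(requirements_text, approved=APPROVED_PACKAGES):
    """Return package names in requirements-style text that are not approved."""
    flagged = []
    for line in requirements_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        # Keep only the name before any version specifier, extras, or marker.
        name = re.split(r"[<>=!~\[;\s]", line, maxsplit=1)[0].lower()
        if name and name not in approved:
            flagged.append(name)
    return flagged


if __name__ == "__main__":
    sample = "requests==2.31.0\nreqeusts>=1.0\n# pinned by platform team\nflask\n"
    for name in unapproved_dependencies(sample):
        print(f"Not on the approved list, review before installing: {name}")
```

A check like this only flags names for human review. It does not decide whether a package is malicious, and it does not handle pip options such as `-r` or `-e`, or normalize hyphens and underscores in package names.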

4. ⚔️ The Kill Chain Is Obsolete When the Attacker Is an AI Agent

Anthropic disclosed that a state-sponsored threat actor used an AI coding agent to run a largely autonomous cyber espionage campaign, detected in mid-September 2025, against roughly 30 global targets. The AI handled 80–90% of tactical operations on its own: reconnaissance, writing exploit code, and attempting lateral movement at machine speed. Detection and response tooling built around human-paced attack timelines needs rethinking.

5. 💳 Your Employees’ AI Accounts Are Being Sold Underground

Flare’s research found that premium AI accounts for ChatGPT, Claude, Copilot, and Perplexity are now traded on underground markets alongside email accounts and developer tools. Listings are product-like in language, bundled with “full API access” or “no limits” claims, accessible to buyers without technical expertise. The risk isn’t just account abuse. Employees using compromised AI accounts may be feeding internal documents, code, and research into sessions that are fully visible to the threat actor who sold the access.

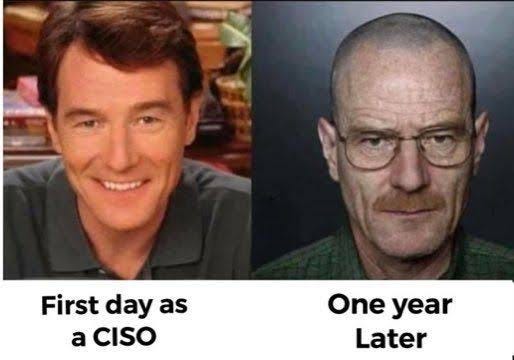

Bonus. CISO Tip of the Day

Stories 3, 4, and 5 point at the same problem from different angles: AI tools in your environment are a new class of identity and data risk. Your AI acceptable use policy needs to answer three questions before next quarter: which tools are approved, what data can be shared with them, and who owns the account credentials and how they are monitored.

- Alex